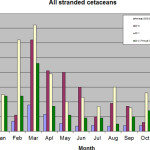

There have been a number of posts at Deep Sea News lately that have attracted intense commentary and a lot of back-channel communication, some of which has been nice, and some, well, not so much. We encourage reasoned discussion and debate around here, of course, and can have a good laugh about the critics, but a significant chunk of the comments and communications don’t really fit in either category. Rather, they have some things in common with past discussions here and elsewhere that make them, to me at least, worthy of discussion in and of themselves. I’m not talking about the blatant trolling, conspiracy theories and ad hominem attacks – those are easily dealt with – rather, I mean the comments that seem valid (their authors probably genuinely believe them) until you apply some basic reason or logic. Below is a list of recent marine disasters that have prompted vigorous debates here on Deep Sea News.

- Fukushima radiation leak

- Starfish wasting disease

- the Deepwater Horizon oil spill

- California oarfish “mortality event”

- Hurricane/Superstorm Sandy

- the “great Pacific garbage patch”

- the current Atlantic dolphin Unusual Mortality Event (UME)

- the collapse of various fisheries, exemplified by the Long Island Sound lobster fishery

So what’s wrong with debating these fascinating topics? Well, nothing, as long as the discussions are based on reason, information and, frankly, reality. Also nothing, as long as there actually IS a debate, which isn’t always the case. Here are some examples of the sort of reasoning that we have seen in comments, emails and tweets about the above examples:

- Starfish wasting disease. Starfish are melting. Radiation leaked into the ocean at Fukushima. Therefore Fukushima caused the starfish melting.

- Hurricane/Superstorm Sandy. Hurricane Sandy happened. Then dolphins began dying on the Atlantic coast. Therefore Sandy caused the Atlantic dolphin UME.

- The “great Pacific garbage patch”. There’s a giant patch of garbage out there. If we could just sort of scoop it up, that would be good. Someone should invent something to do that.

- The Long Island Sound lobster fishery. “They” sprayed insecticides in the tri-state area to control mosquito populations. Around the same time, lobsters died. Therefore insecticide spraying killed lobsters.

Among these sorts of comments and communications dwell many types of formal and informal logical fallacies, that is, flawed reasoning. A common one is Arguing from Ignorance which is not meant as an insult, but is defined as “assuming that a claim is true because it has not been proven false”; this can be seen as a facet of Arguing from Silence, where a cause is assumed based on absence of evidence. For example, the LIS lobster fishers made an erroneous connection between insecticide spraying and lobster mortality back in 1999 because there wasn’t another explanation at the time and it seemed reasonable (to them). A big problem is that you can make this sort of logical fallacy in the blink of an eye – it’s basically intellectual laziness – whereas the sorts of controlled and rigorous studies required to build a good theory for any environmental disaster can take a really long time. In other words, fallacy is instantaneous but truth works at the speed of science, which is, unfortunately, often pretty gastropodal. Not enough time has yet elapsed to reveal the true cause(s) of the starfish melting syndrome, for example, but in the case of the LIS lobsters, science showed pretty unequivocally that the mortality resulted from a multifactorial suite of environmental problems, particularly chronically elevated temperatures and persistent hypoxia, probably exacerbated by some fishery-related factors. Pesticides didn’t enter into it. And yet, if you ask the average Joe on the street in Hicksville, they are more than likely to say that the pesticides killed the lobsters in ’99, because that message – wrong as it was – was widely disseminated in the heat of the crisis, whereas the truth came out quietly in scientific papers and agency reports years later when the crash had long since faded from The News.

A related problem is that in the time between when people first propose a fallacious cause, and when the true cause is revealed through reason and research, the fallacious one can become ingrained like an Alabama tick. Once people get an idea in their head, even if it’s wrong, getting them to let go of it can be bloody hard. Indeed, there’s a term for this; it’s called “the Backfire Effect”: confronting someone with data contrary to their position in an argument counter-intuitively results in their digging their heels in even more. For this phenomenon, the media has to accept a sizable chunk of responsibility because, as the lobster example shows, the deadline-driven world of media agencies is more aligned with the rapid pace of the logical fallacy than with the slow and deliberate pace of scientific research. Many media outlets are quite happy to give airtime to ideas that haven’t yet been critically evaluated, especially if there isn’t much other information to report (yet) about a given crisis. I’m pretty sure some folks I know are going to totally jump down my throat for saying that. They will doubtless point out that journalists are the defenders of the One Truth, but this is my editorial soapbox, so go ahead fellas. Besides, Jon Stewart calls CNN out for this sort of stuff practically every night, and if it’s good enough for him…

Perhaps the most common flawed thinking we see in the comments and back channels of #DeepSN is the false correlation, or causality inferred from coincidence; formally, this is called post hoc ergo propter hoc. This was the fallacy that Jenny McCarthy committed when she decided that the MMR vaccine had caused her son’s autism, simply because the latter followed the former closely in time. Well yeah, 100% of car accident victims ate breakfast that day too, but you don’t see people ditching their Cheerios, do you? McCarthy’s willingness to shout her ignorance from the rooftops (and Oprah’s couch) has done untold damage to the public health, especially as it now turns out that her son didn’t have autism anyway. The point is, logically flawed thinking of this kind is not trivial or a private deficiency; it can cause real harm to the thinker and to others. The recent kerfuffle on Chris Mah’s excellent post debunking a link between the Fukushima disaster and the starfish melting syndrome on the US Pacific coast is a perfect example of post hoc thinking. Fukushima happened -> Starfish wasting happened -> therefore Fukushima caused starfish wasting. As Chris pointed out, though, starfish wasting started before the Fukushima event, so even before any research has been done on the true cause of the syndrome, we can comfortably discount Fukushima radiation as the primary contributor. If, as an academic exercise, you apply post hoc thinking in light of Chris’s point, flipping the first two premises in the above syllogism, you could just as easily argue that Starfish wasting caused the Fukushima event! That’s obviously absurd on its face and just serves to reveal the fallacy for what it is. It may be more plausible that the radiation caused the starfish melting rather than the other way around, but that doesn’t make it any less fallacious. Another aspect of the post hoc phenomenon is that it doesn’t seem to happen in the good direction, only the bad. Fukushima must have caused the starfish melting syndrome, but no one is jumping up and down saying that the record numbers of whales in California waters this year are a pleasant and unexpected side effect of Fukushima, even though it’s happening at the exact same time as the starfish problem.

One last example of flawed thinking that inhibits reasoned debate about ocean science issues is false pattern recognition, or simply “leaping to conclusions”. The 2013 case of “oarfish mortality” is a great example. Last year precisely two oarfish washed up in California, within a couple of weeks of each other. Oarfish are rare, so when two of them washed up in quick succession, many folks were quick to assume that the two events were related and that we were at the start of an oarfish mortality event. Of course, it was just a statistical anomaly; a rare event that nonetheless happens inevitably if you wait long enough. TV and radio media are some of the worst offenders when it comes to leaping to conclusions this way (print media outlets tend to be a bit more rigorous). One of the ways they justify this is through posing a question. Rather than framing the piece as “Oarfish mass mortality underway”, which would require fact checking, they go with “Are we at the start of an oarfish mortality event?” and support it with a few quotes from bystanders asked to wax hypothetical about their experiences. By framing the story as a question or hypothetical in this way, journalists abdicate somewhat the responsibility to substantiate the claims made. It may appear to editors to be a harmless practice that stimulates conversation around an interesting topic, but it often causes a significant amount of work for those who make it their business to try to inject a bit of science into the public conversation. This is especially the case when the truth (statistical anomaly) is a lot less interesting than the alternative, that 30ft oarfish are going to start washing up all over the place!
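For the numerically inclined, here is a rough sketch of why a “cluster” of rare events is less surprising than it feels. It assumes strandings are independent and arrive at a constant average rate (a Poisson process), which is a simplification. The rate, the two-week window and the number of gaps below are made-up illustrative numbers, not real oarfish data, and the function names are hypothetical.

```python
import math


def prob_gap_within(rate_per_year: float, window_days: float) -> float:
    """Chance that the next event falls within `window_days` of the previous one.

    Under a Poisson process the waiting time between events is exponential,
    so P(gap <= t) = 1 - exp(-rate * t).
    """
    return 1.0 - math.exp(-rate_per_year * window_days / 365.0)


def prob_at_least_one_cluster(rate_per_year: float, window_days: float, n_gaps: int) -> float:
    """Chance that at least one of `n_gaps` independent gaps is that short."""
    p_short_gap = prob_gap_within(rate_per_year, window_days)
    return 1.0 - (1.0 - p_short_gap) ** n_gaps


if __name__ == "__main__":
    # ASSUMPTION: one stranding every two years on average (hypothetical rate).
    rate = 0.5
    # Any single pair of strandings landing within two weeks is unlikely...
    print(f"one gap under 14 days: {prob_gap_within(rate, 14):.3f}")
    # ...but across many gaps, at least one such pair becomes quite likely.
    print(f"at least one in 50 gaps: {prob_at_least_one_cluster(rate, 14, 50):.3f}")
```

The point of the sketch is only the shape of the argument: a coincidence that is improbable on any given occasion becomes likely once you give it enough occasions to occur. The real numbers would depend on data we don’t have here.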

There is a whole slew of other related phenomena collectively called “cognitive biases” (of which the Backfire Effect is one example) that come into play during heated debates about events like Sandy, Deepwater Horizon and Fukushima. I am not even going to scratch the surface on those here, because this post is long enough already and we hope to have some experts on these phenomena comment here soon. In the meantime, perhaps one way we can help move the conversations in more helpful directions would be a checklist that people can consult to check their logic. After all, awareness of a problem is half the solution, amIright? Scientists often have some form of this kind of thinking ingrained as a part of their training, but not always, so it can’t hurt for all of us to think consciously about our thinking, me included. To that end, I offer the following non-comprehensive list of things to consider before you hit “Reply” on that cleverly crafted response. If you have additional suggestions I invite you to add them in the comments.

- Am I seeing a pattern that could just be a statistical rarity, and leaping to a conclusion?

- Am I connecting two events causally, because they occurred close together in space or time?

- Am I inferring a cause in the absence of evidence for any other explanation?

- Am I thinking inductively, “It must have been such and such…”?

- Am I framing the issue as a false dichotomy (debating only two possible causes, when there may be many others)? In other words, am I framing the issue as an argument with two sides, rather than a lively discussion about complex issues?

- Am I attacking my “opponent” and/or his/her credentials, rather than his/her argument?

- Am I arguing something simply because other/many people believe it to be true?

- Am I ignoring data because I don’t want to lose face by conceding that I may be wrong?

- Am I cherry picking data that support my position (a cognitive bias)?

Deep Sea News seeks to raise awareness through scrutiny, not negativity. By that we mean that we try our best to stick to the facts and then deliver them in our usual style of “reverent irreverence”. For those who favour the ad hominem attack: we’re not paid to blog and we don’t all work at the same place (in fact, we all work at different places, all educational or non-profit). We’re just 7 scientists who love what we do and want to share that passion with everyone else. We relish vigorous discussion about the subject we all love, marine science, so with a bit of luck and a bit of effort, I hope we can improve the conversation by keeping it reasoned and scientific, so that DSN stays fun and informative, and doesn’t become a hive for trolls and a battlefield for flame wars.

I’d like to start by saying I love you.

I admire your attempts to educate the masses; it is a goal of mine as well. It is a thankless job.

There will always be people who are ruled by faulty logic, and the only thing we can do is try to educate a few people so there will be a voice of reason, however small.

Great article, one issue though – I don’t eat breakfast, does that mean I am immune from car accidents? :p

Yep, and axe murderers too. Lucky you!

Thanks for this. I work in climate change, but the flawed thinking runs along the same lines. Hope you don’t mind if I pass along some of your observations to my colleagues…

Hicksville: It’s a place! On Long Island!

https://maps.google.com/maps?q=Hicksville,+NY&hl=en&sll=42.746632,-75.770041&sspn=7.397022,10.360107&oq=hicks&t=h&hnear=Hicksville,+Nassau,+New+York&z=14

Yeah, where did you think I meant? Some fictional place that people refer to when they mean the middle of nowhere? I would never…

Great article. I hope your website continues to be a space of interesting, well-supported articles with rational discussion which can extend the learning space. And that people can bring their passion without their aggression to those discussions.

All very good points. Much (but not all) of what gets posted on the internet and served to the general public by the many media outlets can (and should) be carefully scrutinized by readers. We must not forget that all paid media exists either to produce a profit for someone or to advance a political or social agenda. Because of this, and as history shows us, many times the “facts” of the stories are presented in a somewhat less than scientific way. Consequently, very unscientific conclusions are often offered based on reports and opinions that have not been properly vetted. Unfortunately, many people still live by the idea that “if it’s on the internet or on television, it must be true.” It seems that many of the popular media outlets operate to a higher goal than exposing “the One Truth…” That one higher goal is to gain a larger viewership (at all costs). Higher viewership translates to greater sales/sponsorship which is directly related to “more money.” Thankfully, most peer reviewed scientific journals do not operate under pure profit motive. Sadly, the general populace appears to have neither the savvy nor the desire to access and read these (more reliable) resources. But then this is just my humble opinion… :)