Recently, I wrote about research finding that the deep sea has warmed drastically within the last decade. Specifically, I mentioned how the research team used models to discover this.

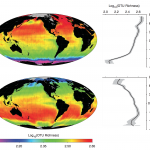

New research has found this missing energy in the deep oceans. The findings rise from a model (ORAS4) tested against and based on temperature and salinity data from 1958-2009, drawn from at least a dozen different data types and from all over the world’s oceans. This is a massive undertaking and represents our best estimates of changes in the ocean’s heat, i.e. thermal energy, over the last half century.

A website called Junk Science went after the idea that this was just a model and not reality.

This is junk science because…

… “the findings rise from a model (ORAS4).” Model predictions should be verified by reality. They are not standalone science.

A commenter also stated:

Actually Climate Models ARE stand-alone Science. They certainly are not connected to any reality WE live in!!

So before I get to why the above statements are wrong, I want to give some background on Junk Science and creator Steve Milloy. Milloy is basically an industry-funded denier.

Among the topics Milloy has addressed are what he believes to be false claims regarding DDT, global warming, Alar, breast implants, secondhand smoke, ozone depletion, and mad cow disease [source].

Milloy, who debunks global warming concerns regularly, runs two organizations that receive money from ExxonMobil [source]

According to Lisa Gonzalez, manager of external communications for Altria, the parent company of Philip Morris, Milloy was under contract there through the end of last year. “In 2000 and 2001, some of the work he did was to monitor studies, and then we would distribute this information within to our different companies,” Gonzalez said. Although she couldn’t comment on fees paid to Milloy, a January 2001 Philip Morris budget report lists Milloy as a consultant and shows that he was budgeted for $92,500 in fees and expenses in both 2000 and 2001. [source]

He’s also a Fox contributor and works with the libertarian conservative Cato Institute. As Naomi Oreskes writes in “Merchants of Doubt,” Milloy’s goal is to “freely attack science related to health and environmental issues. It didn’t matter who had done the work … [i]f the results challenged the safety of a commercial product, Milloy attacked them.”

According to his website,

Mr. Milloy is a biostatistician and securities lawyer who has also been a registered securities principal, investment fund manager, non-profit executive, and a print/web columnist on science and business issues.

So as a biostatistician, Milloy should know more about models.

Now arguably some of this rests with me because I didn’t discuss the model more or specify the type of model. There are several different types of models in science, and each seeks to address the world differently.

- Null models: These models ask mathematically what would occur in the absence of a variable of interest. Say I’m interested in what controls the diversity of snails. My hypothesis is that as food increases you get higher diversity of snails. I would create (and have) a model that includes everything but food to see what pattern would emerge. If my null model without food yields a different pattern than the one I find in the real world, then this supports my hypothesis. If, on the other hand, my null model without food shows a pattern similar to the one I find between snail diversity and food, then it doesn’t support my hypothesis. Why? Because, as my model shows, the pattern can emerge from processes not even related to food.

- Exploratory models: I find an intriguing pattern but I don’t know exactly why it arises. I dump everything I know about the system into a set of equations. I vary different parameters of the model in each trial run. Say in the model above I add food but in one trial I model in lots of food and in another little. In other trials I alter temperature or maybe aspects of the biology of snails like growth and reproduction. I keep doing this over and over. This tells me a lot about how complex interactions between variables can create different patterns. In addition, if the results start to match a pattern in the real world, this gives some insights into what might be occurring naturally. So for example, I can predict the amount of dispersal by larvae versus environmental need of an average adult clam that would be needed to generate patterns of diversity across the Atlantic Ocean.

- Statistical predictive models: These find the best mathematical equations to fit real world data. They are built up from data and tested against other data. The best models (of any type) are always based on data, make multiple predictions, and are tested with a whole variety of new real data. In the simplest case, this model could be a simple linear regression in which we predict one variable (snail diversity) as a function of another (temperature): Diversity = 1.2 + 2.3(Temp). The more knowledge we enter into the model, the better the fit of the model to the data will be. In the most complicated case, you have oceanographic models that pull in a multitude of data streams, equations, and scientific knowledge. These are able to predict, with high accuracy, anything from currents to temperature to salinity to phytoplankton blooms and much more.
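To make the simplest case concrete, here is a minimal sketch of a statistical predictive model: fit a line to one batch of data, then test it against data it has never seen. Everything in it is an assumption for illustration. The snail data are made up (simulated from the Diversity = 1.2 + 2.3(Temp) example plus random noise), and the helper names `fit_line` and `r_squared` are hypothetical. It has nothing to do with how ORAS4 itself is built.

```python
import random


def fit_line(xs, ys):
    """Ordinary least squares fit of y = a + b*x. Returns (a, b)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    b = sxy / sxx
    a = mean_y - b * mean_x
    return a, b


def r_squared(xs, ys, a, b):
    """Fraction of the variance in ys explained by the line a + b*x."""
    mean_y = sum(ys) / len(ys)
    ss_res = sum((y - (a + b * x)) ** 2 for x, y in zip(xs, ys))
    ss_tot = sum((y - mean_y) ** 2 for y in ys)
    return 1 - ss_res / ss_tot


# Made-up "observations": temperatures between 0 and 20 degrees, and snail
# diversity simulated from the example equation plus noise (assumption).
rng = random.Random(42)
temps = [rng.uniform(0, 20) for _ in range(200)]
diversity = [1.2 + 2.3 * t + rng.gauss(0, 2.0) for t in temps]

# Build the model from one half of the data...
train_t, test_t = temps[:100], temps[100:]
train_d, test_d = diversity[:100], diversity[100:]
intercept, slope = fit_line(train_t, train_d)

# ...and test it against the half it was never shown.
score = r_squared(test_t, test_d, intercept, slope)

print(f"Diversity = {intercept:.2f} + {slope:.2f}(Temp)")
print(f"R^2 on held-out data: {score:.3f}")
```

The point of the split is the same one made above: a model earns its keep by predicting data that were not used to build it. If the score on the held-out half were poor, that would be the cue to chuck the model.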

With any model, if it performs poorly then it is chucked. Why would we keep a crappy model? Indeed, if a model is crap I would publish a paper saying it’s crap, because hell I can always use another good publication on my CV.

Like I mentioned before, I didn’t state what kind of model ORAS4 is. It is number three, a statistical predictive model. Magdalena Balmaseda, the lead author of the study, had this to say in an email:

ORAS4 uses the similar (if not the same) subsurface ocean observations of temperature as other observational data sets such as the NOAA reconstruction of Levitus. In addition ORAS4 uses also sea level observations from altimeter, salinity profiles, and sea surface temperature. The dynamical ocean model + surface winds are only used for interpolation of those observations. Arguably, this interpolation method in ORAS4 is more sophisticated that the one used in more traditional estimates. It offers much better temporal resolution, and event such as El Niño are detectable.

So to rephrase, ORAS4 uses not only observations from the Levitus dataset, including temperature, salinity, oxygen, phosphate, nitrate, and silicate, but also more data on sea surface height, salinity, and temperature. Hell, the model even builds in currents and winds for shits and giggles and accounts for biases related to changes in ocean observing systems. This model is the Borg of oceanographic models: it assimilates all the data.

So what does ORAS4 do? Well it predicts a lot of things accurately!

- It predicts El Niño/La Niña cycles

- It predicts the occurrence of three major volcanic events that spewed ash into the atmosphere and caused cooling of the oceans

- The warming pattern that emerges in the model in the last decade above 700 meters is comparable with actual measured temperature in the ocean.

To suggest that the findings aren’t real because they’re based on a model is disingenuous at best and outright deceit at worst, especially coming from someone who states he is a statistician. Or maybe Milloy was confused and thought I was talking about runway models, which do have a rather poor track record of predicting oceanographic patterns.


Maybe as a registered securities principal and investment fund manager, he is confusing economic modelling with scientific modelling…

I like posts that serve to debunk and educate, you’ve threaded the needle nicely.

So then, this Milloy dude obviously has no conscience and is a well paid liar for harmful corporate enterprises.

That’s the common method of deniers of science: they just forge a sentence that seems to make sense, make a lot of noise, and they win. Scientists lose, because they don’t make a lot of noise and generally need longer, more difficult to understand sentences to debunk the fallacious claim.